A scientific operating substrate for turning activity into state, state into tasks, and tasks into action.

A foundation has money for three experiments. A lab finished a failed run yesterday, but the result is still in an instrument export and a thread. A model proposes a trial design this morning against last month’s literature. By Friday, the experiments will be chosen before the failed run is absorbed, and the old assumption will buy another month of work.

That is the failure: the correction arrives after the decision.

Science already has most of the parts: instruments, papers, datasets, code, peer review, clinical records, AI agents, cloud labs, funders, and expert communities. What it lacks is the drivetrain that lets one piece change the next before another decision is made.

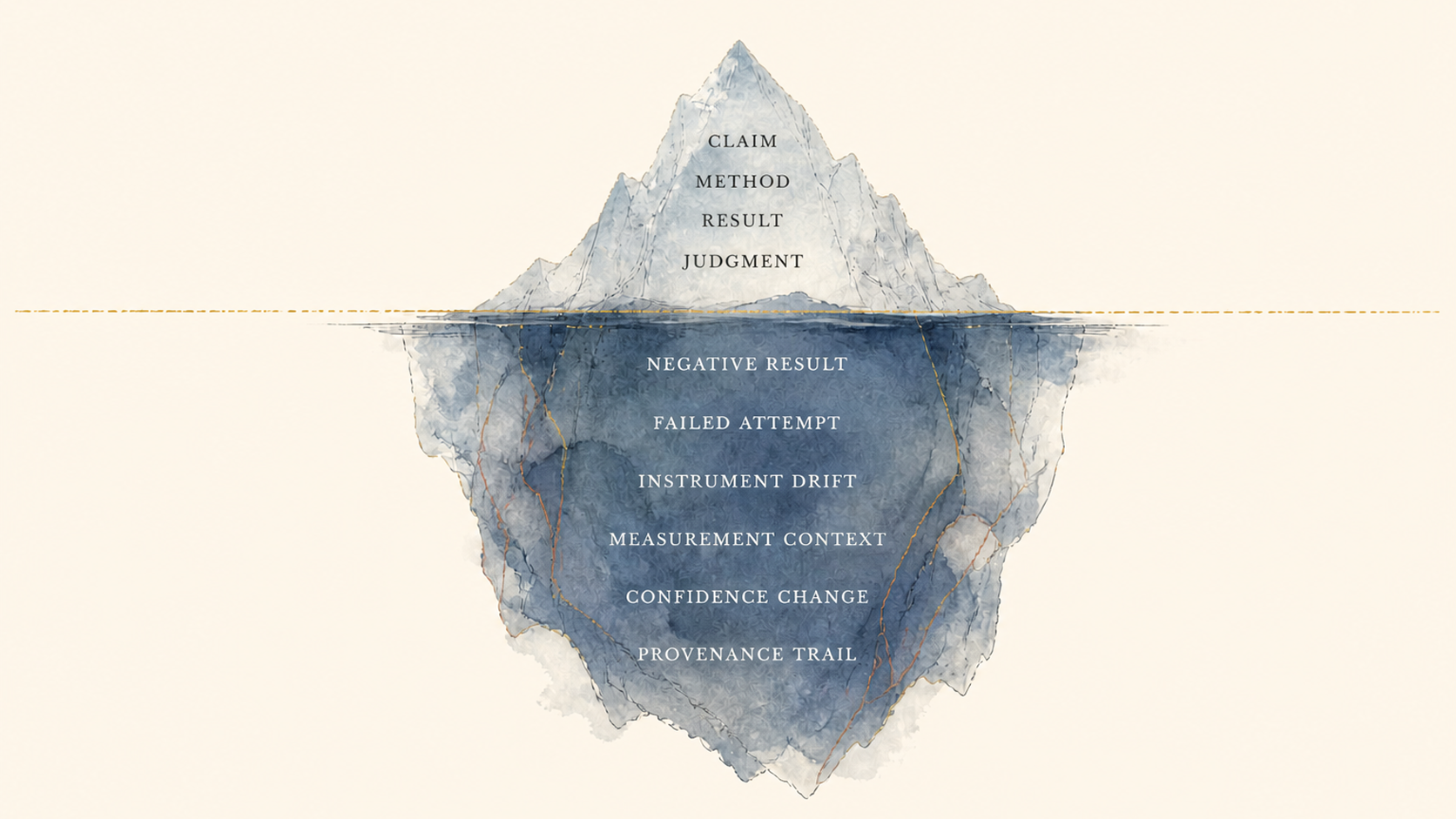

Those parts do not share the thing that matters most: the current state of the claim. Papers, datasets, lab traces, model outputs, review notes, funding decisions, and clinical observations all record activity in different places. The diagnosis sits beside older infrastructure efforts: FAIR data principles (Wilkinson et al., 2016), nanopublications (Groth et al., 2010), scientific workflow provenance (W3C PROV), and knowledge systems such as the Open Research Knowledge Graph. The point here is narrower: not better metadata alone, but a governed state transition.

The fragmentation reaches beyond tooling into epistemic state. A paper can tell you what an author claimed. It does not, by itself, tell the next system what changed, where the change applies, what confidence moved, what contradiction appeared, what depends on the claim, who attested it, and what should be tested next.

This is why a scientist still assembles the car by hand. She searches the literature in one place, checks data in another, reads code in a third, reconstructs methods from a supplement, asks a colleague whether a failure was real, opens a model chat that will not remember the correction tomorrow, and then writes a narrative artifact that another person has to reverse-engineer later. Wrong trial assumptions continue. Failed experiments repeat. Funders buy isolated reports. Patients wait while updates remain trapped in local memory. The system contains intelligence and labor. It does not contain a shared transition object.

The engine science needs is a procedure: every artifact should be able to propose a governed change to the shared record, and every accepted change should be able to guide the next task in time to matter.

One business analogy helps, but it is secondary. Rippling shows that workforce operations become more powerful when HR, IT, finance, payroll, identity, devices, permissions, workflows, and reporting can read and write shared employee state. The lesson is shared operational state: many surfaces acting on one underlying object.

Start from a week of use, not the object model. A foundation wants to know what to fund next. A student wants a real task at the frontier. A robotic lab has a failed run to deposit. A model makes a prediction that should either earn calibration or lose it. The engine exists so each of those ordinary actions lands in the same frontier instead of disappearing into four separate systems.

Science needs an equivalent shared object. For science, that object is the scientific state transition, not the person, paper, dataset, project, lab, agent, or grant around it.

Fig. 01. The trilogy. The public frame is Record, Engine, Body. In the essay-world, the same movement is Sky, Engine, Body: the shared record, the transition loop, and the physical infrastructure that acts on it.

The engine sits between two arguments. Constellations of Borrowed Light argues that science needs a shared record. The Terafactory Age asks whether the engine reaches the physical world as an open public body or as closed private bodies first. This page names the machinery between them.

The public structure is Record, Engine, Body: the record remembers, the engine moves, the body acts. Activity becomes state, state guides action, and action returns to the record.

The claim here is narrower and more mechanical than the vision around it: if scientific work is going to compound across humans, agents, world models, and labs, the basic operating unit has to change from an artifact to a governed state transition.

Think of the engine as an operating loop before you think of it as storage.

The loop starts with a goal: cure a disease, prove a theorem, build a better material, explain a climate signal, identify a safety risk, or decide which experiment deserves scarce lab time. The goal pressures the frontier. It determines which uncertainty matters enough to become work.

The goal becomes a frontier: what is known, what is unknown, what is contested, what depends on what, and which uncertainties are worth spending effort to reduce. A frontier becomes tasks. Tasks are assigned to humans, agents, models, reviewers, labs, funders, or institutions. Activity produces artifacts: papers, extractions, simulations, protocols, robot runs, code, clinical observations, field measurements, and reviews.

Activity still has to pass through the drivetrain before it becomes state.

The reference implementation can have many named surfaces, but the loop itself is simple. Preserve the artifact. Normalize what it says. Expose the proposed change. Decide whether it merges. Record the accepted transition. Render the frontier that changed. Everything else exists so that loop can be governed rather than merely executed.

A failed run shows the difference. The lab deposits its protocol trace and readout. The diff says a dependent claim should weaken in this cell line but not in the broader mechanism. A reviewer signs the narrow change, an event records it, and the next task queue stops assigning that experiment as if nothing had happened.
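The six steps can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the reference implementation: the names `Diff`, `Event`, `propose`, `merge`, and `render_frontier`, the use of a single confidence number per claim and scope, and the one-signer merge rule are all invented for the example.

```python
from dataclasses import dataclass
import hashlib
from typing import Dict, List, Optional, Set, Tuple

Key = Tuple[str, str]  # (claim_id, scope); scope "*" stands for the broad claim


@dataclass(frozen=True)
class Diff:
    claim_id: str
    scope: str            # where the change applies, e.g. one cell line
    delta: float          # proposed change in confidence (hypothetical scale 0..1)
    artifact_sha256: str  # pointer back to the preserved artifact


@dataclass(frozen=True)
class Event:
    seq: int
    diff: Diff
    signer: str


def propose(raw_artifact: bytes, claim_id: str, scope: str, delta: float) -> Diff:
    """Preserve the artifact by content hash and expose the proposed change."""
    digest = hashlib.sha256(raw_artifact).hexdigest()
    return Diff(claim_id, scope, delta, digest)


def merge(state: Dict[Key, float], log: List[Event], diff: Diff,
          signer: str, authorized: Set[str]) -> Optional[Event]:
    """Apply the diff only if the signer may merge, then record the event."""
    if signer not in authorized:
        return None  # the proposal stays a proposal
    key = (diff.claim_id, diff.scope)
    # A new scoped entry starts from the broad claim's confidence.
    base = state.get(key, state.get((diff.claim_id, "*"), 0.5))
    state[key] = min(1.0, max(0.0, base + diff.delta))
    event = Event(len(log), diff, signer)
    log.append(event)
    return event


def render_frontier(state: Dict[Key, float]) -> List[Key]:
    """Rank entries so the most uncertain (closest to 0.5) come first."""
    return sorted(state, key=lambda k: abs(state[k] - 0.5))
```

In this sketch, calling `merge` with an unauthorized signer returns `None` and leaves the state untouched. With an authorized signer, only the scoped entry moves, and the broad claim keeps its value, which mirrors the narrow correction in the failed-run example.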

Fig. 03. The engine loop. The operating unit is not the paper. It is a governed transition: work proposes a change, review decides whether it should merge, the event updates the Atlas, and the next task is selected from the new state. Panel text: one BBB finding spans incompatible cohorts; APOE4+ split, replication request, model recalibration; frontier.rank (BBB delivery discord rises), diff.ready (APOE4 subgroup split proposed), event.commit (Atlas update and task rerank).

This is where a knowledge graph runs out of surface. A graph can store relationships. It does not, by itself, decide what should change next, who can propose it, who can merge it, what evidence is sufficient, or what physical action follows. A graph is a map. An engine needs a clutch.

Agent runtimes have the same limit. Agents can produce activity. They can extract, summarize, design, critique, and execute. Without a governed transition layer, their work becomes more output for another agent to summarize later.

The test is practical. A better database helps a lab find what happened. A better notebook helps a lab preserve what happened. A better agent helps a lab produce more things that happen. The engine is different only if it changes the next decision: a trial pauses, a replication is routed, a model recalibrates, a funder stops buying a fragile assumption, or a lab avoids repeating a failure another lab already paid for.

The loop is the drivetrain. Every product in the ecosystem matters only insofar as it helps the loop run with more fidelity, more throughput, more legitimacy, or less waste.

The design pressure is that the loop must be mundane enough for ordinary work. A graduate student should be able to extract a method, an agent should be able to open a provenance audit, a reviewer should be able to sign a narrow correction, and a lab should be able to write back a failed run without turning the act into ceremonial publication. The engine is only real when the smallest transition can travel.

A discovery engine has four planes. They are a practical discipline rather than vocabulary for its own sake.

Fig. 04. Four planes. The architecture separates what is known, how work is coordinated, how outcomes are forecast, and what physically changes. The planes share one event spine, but each one has a different shape of work.

State: known, contested, scoped, actionable. Finding / Evidence / Frontier.

Control: what should happen next. ResearchTask / Queue / SafetyGate.

Model: what might happen if we act. Simulation / Prediction / Calibration.

Action: what touched reality. Protocol / RobotRun / LabResult.

The state plane is what is currently known, unknown, contested, scoped, weakened, deprecated, and actionable. Its job is to make scientific state typed, replayable, attestable, and federated. If the state plane fails, the engine becomes another pile of activity.

The control plane coordinates work: what should happen next, who is allowed to do it, and what review is required. This is the lesson from modern software-agent orchestration: serious work needs tasks, isolated workspaces, review output, permissions, and boundaries, not one enormous conversation. OpenAI’s Symphony describes issue trackers, isolated agent workspaces, and human review as the organizing pattern for coding-agent work. The science version needs the same discipline, but with scientific state as the merge target.

The model plane predicts what might happen. Model outputs are powerful, but they do not become state by being generated. A prediction has to record which state it trained on, where it was validated, where it failed, and what evidence later contradicted it.

Fig. 05. Model calibration. The model plane needs its own record. Predictions become useful when they are coupled to the state they read from and recalibrated against the evidence that returns.
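One way to make that record concrete is to store each prediction with the state version it read and score it when evidence returns. The sketch below assumes the Brier score as the calibration measure; the essay does not name a scoring rule, and the `Prediction` fields are hypothetical.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Prediction:
    model_id: str
    state_version: int              # which frontier state the model read
    probability: float              # forecast that the intervention works
    outcome: Optional[bool] = None  # filled in when evidence returns


def brier_score(predictions: List[Prediction]) -> Optional[float]:
    """Mean squared error between forecasts and resolved outcomes (lower is better)."""
    resolved = [p for p in predictions if p.outcome is not None]
    if not resolved:
        return None  # nothing has been written back yet
    total = sum((p.probability - (1.0 if p.outcome else 0.0)) ** 2 for p in resolved)
    return total / len(resolved)
```

Unresolved predictions are excluded rather than counted as misses, so a model is judged only against evidence that has actually returned.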

The action plane touches the world: experiments, robot runs, clinical observations, simulation jobs, field measurements, and funding decisions that actually change what gets done. This is where the engine meets bodies, instruments, patients, organisms, materials, weather, and capital.

The complete engine is the relationship among the four:

No plane can replace the others. A state plane without control is a museum. A control plane without state is a task manager. A model plane without state is a prediction market floating above reality. An action plane without writeback is the current lab system with better robots.

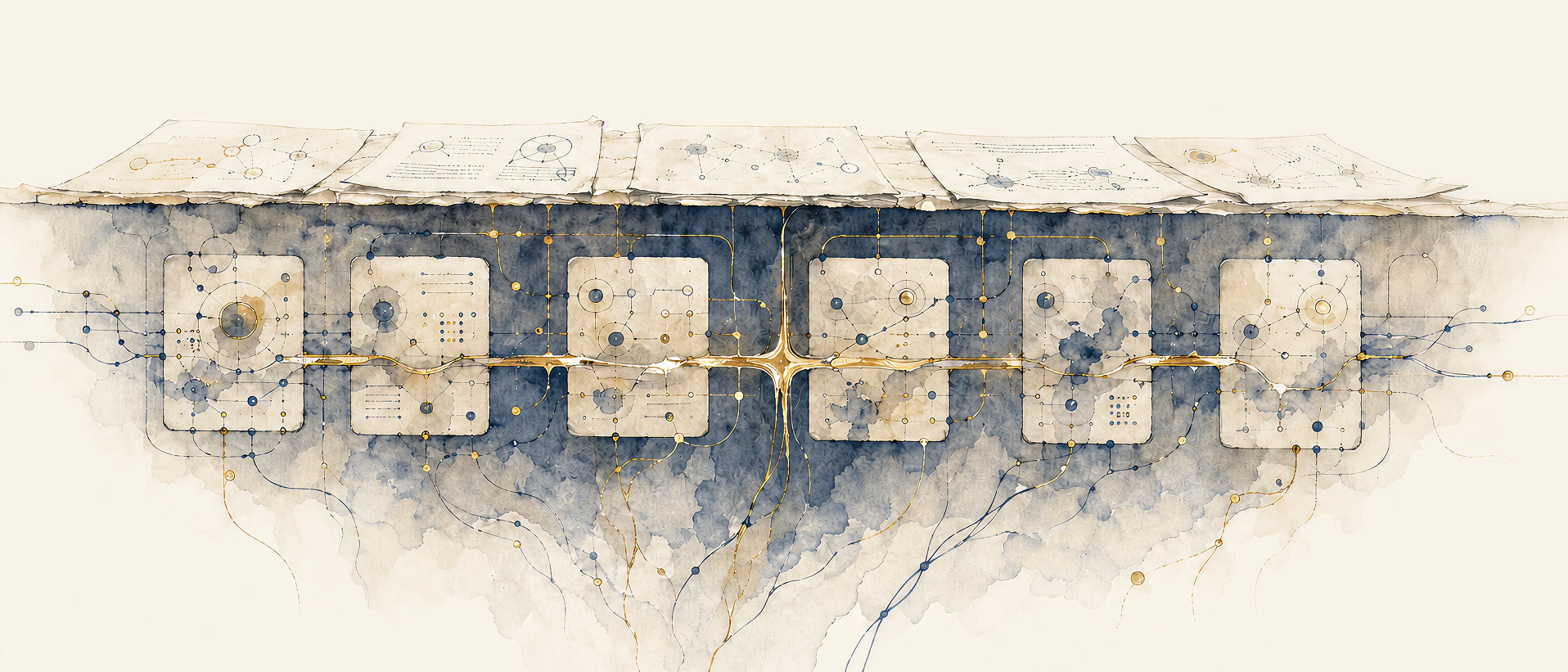

Do not start by imagining a suite. Start by imagining a Tuesday morning.

One person searches the frontier. Another reviews a correction. A lab writes back a failed run. A model records that its prediction lost calibration. A funder asks what should stop receiving money. These should not be five disconnected products. They should be five views over one record.

Specialized tools can exist without fragmenting the frontier when they share the substrate.

The first fundable unit is still narrow: one frontier where signed state transitions, a registry, and a review queue work together. The reference implementation can name the roles, but the names are handles, not the thesis. The thesis is that capture, review, action, and learning should point at the same transition record.

Search, review, lab orchestration, model calibration, education, funding, and safety products can compete as long as they preserve that record.

Fig. 06. Modular products on one substrate. The discovery engine is not a single app. Products can specialize at the surface because they share lower-level state, schemas, identity, replay, and attestation. Panel labels: state, cockpit, control, review, forecast, execution.

The build order should be narrower than the eventual ecosystem. First make state transitions real in one serious frontier. Then make review, frontier state, task routing, lab write-back, and model write-back read and write the same transition substrate.

The structural analogy becomes useful only at this level: many specialized products can remain separate if they read and write one underlying state. Science should copy that lesson while keeping the registry open.

The shared object lets the ecosystem remain plural. Different teams can build better search, better review surfaces, better lab orchestration, better education products, better safety gates, better model registries, and better funding markets. The products can compete while the state remains inheritable. That is the difference between an ecosystem and a suite.

The engine should be concrete enough that a user can ask it for work as well as knowledge.

A disease foundation says: “We want to make progress on neurovascular dysfunction in Alzheimer’s.”

The engine responds with the current frontier, the top unresolved questions, the highest-value discriminating experiments, the claims most fragile to provenance gaps, the agents already assigned, the expert reviews needed, the labs capable of execution, the safety class, the proposed diffs, and what changed this week. The foundation is no longer buying isolated papers or grant reports. It is buying movement in the frontier.

For a foundation, this changes the week. A $5 million program does not begin with a blank call for proposals. It begins with a portfolio frontier: three candidate experiments, two fragile assumptions, one lab-capacity constraint, one safety class, and a reviewer queue. If a failed replication weakens the broad claim on Wednesday, Friday’s funding decision should know.

A student says: “I want to help cure brain tumors.”

The engine routes her to a frontier, then to a task appropriate to her current competence: link evidence for this claim, compare these two papers, check this method, extract the protocol conditions, inspect why a replication failed, draft a proposal, learn from the review. The student does not begin with a textbook/test pathway detached from real work. She begins with a small edge of the frontier that has somewhere for her contribution to land.

A robotic lab says: “Experiment E completed.”

The engine captures the protocol trace, instrument calibration, raw data, measured outcome, uncertainty, and execution context. It creates an evidence object, computes the proposed change, routes it for attestation, updates the shared frontier if accepted, and updates the relevant model calibration records. The lab finishes a run by writing back.

Fig. 07. Instrument writeback. The lab surface should look like instrumentation, not project management. The useful object is the connection between plate position, signal trace, calibration, raw data, and the state transition it proposes.
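A writeback can be sketched as a completeness check followed by the creation of an evidence object that waits for attestation. The field names below follow the list in the scenario (protocol trace, instrument calibration, raw data, measured outcome, uncertainty, execution context); the function names and the `awaiting_attestation` status are assumptions for illustration.

```python
from typing import Any, Dict, List

REQUIRED_FIELDS = (
    "protocol_trace", "instrument_calibration", "raw_data",
    "measured_outcome", "uncertainty", "execution_context",
)


def missing_fields(deposit: Dict[str, Any]) -> List[str]:
    """List the required fields the lab has not supplied."""
    return [name for name in REQUIRED_FIELDS if deposit.get(name) is None]


def to_evidence_object(run_id: str, deposit: Dict[str, Any]) -> Dict[str, Any]:
    """Turn a complete deposit into an evidence object that awaits attestation."""
    missing = missing_fields(deposit)
    if missing:
        raise ValueError(f"deposit {run_id} is missing: {', '.join(missing)}")
    evidence = {name: deposit[name] for name in REQUIRED_FIELDS}
    evidence.update({"run_id": run_id, "status": "awaiting_attestation"})
    return evidence
```

Rejecting incomplete deposits at the instrument boundary keeps a failed run from entering review without the calibration and context a reviewer needs to scope it.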

A model says: “I predict intervention X will work.”

The engine converts the prediction into a counterfactual proposal, checks the context and assumptions, ranks expected information gain, applies the safety gate, routes to lab or simulation, and writes back the outcome. The prediction earns, loses, or keeps trust through its relationship to state, action, evidence, and calibration.

These scenarios are intentionally ordinary. Their value is mundane: the work of science stops being lost between systems.

Mon: frontier gaps ranked

Tue: evidence object improves

Wed: claim scoped; model weakened

Thu: Atlas state updates

Fri: funding decision changes

Fig. 08. A week in the engine. The operating substrate matters because ordinary actions land in the same frontier. A grant question, student contribution, lab failure, review decision, and scheduler update become one governed week of state movement.

Scale is a post-v1 stress test. The first corridor may only have hundreds of deposits a month. The design still has to survive the moment generation outruns review.

Scaling to millions or billions of scientific agents cannot mean every agent talking to every other agent. The literal number is a stress test, not the premise. The engineering analogy is mature open-source governance and software-agent orchestration: broad proposal access, bounded workspaces, scarce maintainer review, CI, reputation, and merge authority. Science has a harsher version because a bad merge can move trials, lab work, funding, or safety decisions rather than code.

You scale with structure.

Frontiers become shards: pediatric high-grade glioma, blood-brain-barrier delivery, protein binder design, a disputed theorem, climate attribution, drought-tolerant cultivars, direct-air-capture sorbents. Agents operate inside frontiers, not in one global chat.

Tasks become the coordination primitive. Check an evidence span. Extract a method. Propose a discriminating experiment. Review a contradiction. Verify a proof. Audit a provenance chain. Draft a lab protocol. Inspect a model’s sim-to-real gap.

A task contract carries more than a prompt: objective, frontier, input state, required capability, workspace boundary, allowed tools, safety class, evidence standard, expected output, reviewer requirement, expiry, budget, and downstream dependencies. The scheduler can deduplicate near-identical tasks, lease work to qualified actors, expire stale tasks, retry failed work, archive dead ends, and route high-risk outputs to heavier review.
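A task contract and a scheduler can be sketched as follows. This is a simplified illustration: `TaskContract` carries only a subset of the fields listed above, the `Scheduler` class and its method names are hypothetical, and deduplication here is exact matching after whitespace and case normalization, which is much weaker than true near-duplicate detection.

```python
from dataclasses import dataclass
from typing import Dict, Optional, Set, Tuple


@dataclass
class TaskContract:
    objective: str
    frontier: str
    required_capability: str
    safety_class: str
    expires_at: float                # on whatever clock the scheduler uses
    leased_to: Optional[str] = None


class Scheduler:
    def __init__(self) -> None:
        self.tasks: Dict[Tuple[str, str], TaskContract] = {}

    @staticmethod
    def _key(task: TaskContract) -> Tuple[str, str]:
        # Normalize case and whitespace so trivially different copies collide.
        return (task.frontier, " ".join(task.objective.lower().split()))

    def submit(self, task: TaskContract) -> bool:
        """Add a task unless an equivalent one already exists."""
        key = self._key(task)
        if key in self.tasks:
            return False
        self.tasks[key] = task
        return True

    def lease(self, actor: str, capabilities: Set[str], now: float) -> Optional[TaskContract]:
        """Hand the first open, unexpired task to an actor with the needed capability."""
        for task in self.tasks.values():
            if (task.leased_to is None and task.expires_at > now
                    and task.required_capability in capabilities):
                task.leased_to = actor
                return task
        return None

    def expire(self, now: float) -> int:
        """Drop tasks whose expiry has passed; return how many were removed."""
        stale = [key for key, task in self.tasks.items() if task.expires_at <= now]
        for key in stale:
            del self.tasks[key]
        return len(stale)
```

The lease is the point where capability matters: an actor without the required capability never sees the task, so routing and qualification stay in the scheduler rather than in the prompt.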

Fig. 09. Frontier sharding. At scale, agents do not enter one global room. They attach to frontiers, pick up bounded tasks, and route proposed changes through scarce merge authority.

Agents specialize. One is excellent at PubMed extraction. Another at Lean proofs. Another at protein design papers. Another at trial protocol critique. Another at statistical power checks. Another at lab safety. Another at reconciling contradictory clinical cohorts. Specialization matters because scientific work is a chain of jobs with different failure modes.

Every agent has a reliability record: accepted proposal rate, rejection reasons, later contradiction rate, citation hallucination rate, calibration, domain competence, review usefulness, and safety violations. The record does not have to be punitive to be essential. Reliability affects routing; routing produces work; review and later contradiction update reliability; reliability decays by domain and time. In a world of abundant generation, reliability is infrastructure.
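Decay by domain and time can be sketched with an exponentially weighted acceptance rate. The half-life, the neutral prior of 0.5, and the `ReliabilityRecord` class are assumptions chosen for illustration; a real record would also track the other measures named above, such as rejection reasons and later contradiction.

```python
from collections import defaultdict
from typing import DefaultDict, List, Tuple


class ReliabilityRecord:
    """Per-domain acceptance rate in which old outcomes count for less."""

    def __init__(self, half_life_days: float = 180.0) -> None:
        self.half_life_days = half_life_days
        self.outcomes: DefaultDict[str, List[Tuple[float, bool]]] = defaultdict(list)

    def record(self, domain: str, day: float, accepted: bool) -> None:
        self.outcomes[domain].append((day, accepted))

    def score(self, domain: str, today: float,
              prior: float = 0.5, prior_weight: float = 1.0) -> float:
        """Weighted acceptance rate; with no history it returns the prior."""
        numerator = prior * prior_weight
        denominator = prior_weight
        for day, accepted in self.outcomes.get(domain, []):
            weight = 0.5 ** ((today - day) / self.half_life_days)
            numerator += weight * (1.0 if accepted else 0.0)
            denominator += weight
        return numerator / denominator
```

Because scores are keyed by domain, a strong record in one area does not transfer to another, and an agent that stops contributing drifts back toward the neutral prior.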

Merge authority remains scarce. Millions of agents can propose. Few can merge.

The open-source law carries over:

Proposal access is broad.

Merge authority is governed.

At large scale, planning becomes hierarchical:

Markets and schedulers appear because scarce resources remain scarce: human review, lab time, funding, model recalibration, safety approval, clinical access, material transfer, regulatory attention. The engine does not abolish politics or allocation. It makes the allocation surface visible enough to govern.

Review capacity has to be engineered as deliberately as agent capacity. Low-risk transitions can be deduplicated, sampled, auto-rejected, or routed to narrow credential pools. Safety-relevant, clinical, animal, manufacturing, or high-dependency transitions require named human signers, conflict checks, escalation paths, and service-level expectations. A first corridor can be staffed like a serious study section rather than a social feed: two domain maintainers, one statistical reviewer, one provenance auditor, rotating external signers, and a weekly merge window with written rejection reasons. As a design stress test, a queue that receives ten thousand proposals a week but can merge two hundred is not failing if it discards nine thousand with auditable reasons and reserves scarce review for the transitions that move decisions.
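The routing rule in that paragraph can be sketched as a small triage function. The queue names, the required fields, and the `dependency_limit` threshold are hypothetical; only the risk categories come from the text.

```python
import hashlib
from typing import Set

HIGH_RISK = frozenset({"safety", "clinical", "animal", "manufacturing"})


def route(proposal: dict, seen: Set[str], dependency_limit: int = 10) -> str:
    """Return the queue a proposal enters, with a reason when it is discarded."""
    required = ("claim_id", "evidence", "risk_class")
    if any(not proposal.get(name) for name in required):
        return "auto_reject: malformed"
    fingerprint = hashlib.sha256(
        f"{proposal['claim_id']}|{proposal['evidence']}".encode()
    ).hexdigest()
    if fingerprint in seen:
        return "deduplicated"
    seen.add(fingerprint)
    if (proposal["risk_class"] in HIGH_RISK
            or proposal.get("dependents", 0) >= dependency_limit):
        return "named_human_signers"
    return "credential_pool"
```

Every discard returns a reason string, which is the property the paragraph asks for: a queue may throw most proposals away, but each rejection has to be auditable.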

The worst version of abundant agents produces too much low-legibility work for any institution to sort. The engine’s job is to make generation submit to structure: task boundaries, isolated workspaces, replayable evidence, calibration, review queues, signer reputation, safety classes, and merge authority. Agent spam, stale shards, poisoned evidence, model overconfidence, queue saturation, and reviewer capture are core design inputs for the control plane.

The bottleneck extends beyond intelligence into trustworthy integration.

Votes, comments, stars, citation counts, social attention, and agent output are signals. Trust enters when someone recognized for a domain, by a registry, under a revocable credential, signs a transition under rules that other institutions can inspect, contest, and inherit. The signature has scope, conflict metadata, expiration, appeal paths, and a registry that can itself be audited.
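The checks a registry might run before honoring a signature can be sketched as a single predicate. The `Signature` fields and the `signature_is_valid` function are hypothetical, and the sketch covers only the policy checks (revocation, scope, expiry, conflicts); it performs no cryptographic verification.

```python
from dataclasses import dataclass
from datetime import date
from typing import FrozenSet, Set


@dataclass(frozen=True)
class Signature:
    signer: str
    credential_id: str
    scope: FrozenSet[str]      # domains the signer is recognized for
    expires: date
    conflicts: FrozenSet[str]  # declared conflicts, e.g. lab or funder ids


def signature_is_valid(sig: Signature, domain: str, parties: Set[str],
                       revoked: Set[str], today: date) -> bool:
    """True only if the credential is live, in scope, unexpired, and conflict-free."""
    return (
        sig.credential_id not in revoked
        and domain in sig.scope
        and today <= sig.expires
        and not (sig.conflicts & parties)
    )
```

Each condition maps to one property of the signature described above, so a rejected merge can name which property failed.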

This makes governance a product requirement. Proposal access can be broad. Merge authority has to be governed. Identity, signer recognition, schema evolution, dispute handling, safety classification, maintainer turnover, and registry federation cannot be afterthoughts.

The institution can be small at first, but the rules cannot be vague, because the signature becomes downstream infrastructure.

The first operator should not be a company pretending to be a commons. It should be a chartered nonprofit registry or consortium for a bounded frontier. The governance test is simple: the registry can lose a dispute and survive, lose a founder and survive, lose a vendor and survive, and be forked if it violates its charter. The governance precedents are partial rather than exact: Crossref for nonprofit scholarly infrastructure across competing publishers, IETF for open protocol process, and W3C for web standards maintained across institutional actors.

The first host should be a patient-led foundation or a nonprofit registry hosted by a focused research organization (FRO), with enough convening power to recruit three to five labs before the network effect exists. The pitch to each participant is practical: labs get milestone funding, shared negative-result protection, and a regulator-readable provenance export they could not produce alone. Funders get a weekly frontier export: what changed, what should stop, what should be tested, and which assumptions are now too fragile to buy.

The first pilot is an order-of-magnitude institutional proposal: one disease frontier, one chartered registry, one reviewer queue, negative-result writeback, one regulator-readable export, and a twelve-to-twenty-four-month success metric. The numbers are design assumptions for a serious pilot, not evidence that an existing program has committed. A plausible first budget is $5 million to $10 million for schema maintenance, reviewer time, conformance tests, export tooling, and lab integration. Labs receive milestone money only after signed failed-protocol deposits resolve.

Review capacity needs numbers from the beginning. A first queue can assume hundreds, not millions, of deposits per month: automatic duplicate clustering, rule-based rejection for malformed deposits, ten-to-twenty-minute provenance triage for low-risk transitions, and one-to-three-hour expert review for transitions that touch active funding, trials, animals, manufacturing, or safety. Reviewers are compensated, conflicts are logged, signer authority is scoped, appeals are rare but tracked, and the quality metric is downstream effect. The pilot passes only if at least one accepted correction changes a funding, review, lab, or regulator-facing decision that otherwise would have repeated the old assumption.
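The staffing implication can be estimated with simple arithmetic. In the sketch below, the review times are midpoints of the ranges above (15 minutes and 2 hours), while the deposit count, duplicate rate, malformed rate, and high-risk share are purely hypothetical inputs chosen to show the calculation.

```python
def monthly_review_hours(deposits: int, duplicate_rate: float, malformed_rate: float,
                         high_risk_share: float, triage_minutes: float,
                         expert_hours: float) -> float:
    """Estimate reviewer-hours per month after automated filtering."""
    automated = deposits * (duplicate_rate + malformed_rate)  # never reach a human
    reviewed = deposits - automated
    high_risk = reviewed * high_risk_share
    low_risk = reviewed - high_risk
    return low_risk * triage_minutes / 60.0 + high_risk * expert_hours


# Hypothetical month: 400 deposits, 25% duplicates, 10% malformed,
# 10% of the remainder touching funding, trials, animals, manufacturing, or safety.
hours = monthly_review_hours(400, 0.25, 0.10, 0.10, triage_minutes=15, expert_hours=2)
```

Under these assumed inputs the estimate comes to roughly 110 reviewer-hours a month, split almost evenly between fast triage and expert review. The point is less the number than the sensitivity: doubling the high-risk share adds far more load than doubling the duplicate rate removes.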

The regulator path is advisory first. Request an early scientific or regulatory advice meeting, show that the export preserves provenance and dependency movement, then let one IND, CMC, DSMB, IRB, or IACUC packet include it as supporting evidence without asking the agency to bless the protocol as canonical. FDA formal-meeting programs, including Type C and other advice meetings, provide the regulatory analogy: targeted questions, meeting packages, and early feedback without turning the supporting infrastructure itself into an approval decision. See FDA, Formal Meetings Between the FDA and Sponsors or Applicants of PDUFA Products.

Regulatory coupling should be explicit without pretending the engine approves anything. Some transitions are non-regulatory scientific state. Some are regulator-readable support for an IND, CMC package, real-world-evidence submission, DSMB review, IRB packet, or IACUC protocol. The registry makes provenance and dependency movement inspectable; the agency still decides under its own authority.

The capture point is often above the nominally open layer. Git itself remained open, but much of software collaboration moved into platform-owned issues, pull requests, Actions, review history, social graphs, and contribution reputation. The scientific analogue is sharper. A protocol can be open while the canonical registry of signers, reviewer reputation, lab capabilities, safety gates, and regulatory recognition is closed. Open code with a closed registry is captured infrastructure with a permissive license file.

Fig. 10. Registry as governance. The registry is a social object as much as a technical one. It decides which signatures, safety gates, credentials, and disputes other institutions can trust. Panel text: rotated by foundation charter; forkable under audit failure; domain consortia issue credentials; regulators query registry state; public, delayed, permissioned, excluded; classification is itself reviewable; plural canonical views allowed; discord is represented as state.

The Discovery Engine has to be open protocol first, reference implementation second, ecosystem third, SaaS fourth.

Commons governance needs several enforceable properties:

This is where the middle page connects back to both other essays. Constellations argues for maintainers, plural canonical views, and structural separation. Terafactory argues that the orchestration layer and identity registry above physical facilities are the capture points that decide whether the body stays open.

The Discovery Engine is the architecture that makes those claims operational. Without governance, the engine becomes a faster way to manufacture apparent consensus. With governance, disagreement itself can become state: not noise around the record, but part of the record.

The states have to be explicit: contested, minority view, scope split, replication failed, under appeal, safety held, and retracted. Each has a different effect on what can be shown, routed, funded, repeated, or merged.
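Making the states explicit could look like an enumeration paired with a policy table. The state names come from the list above; the `BLOCKED` table is a placeholder policy invented for this sketch, and a real registry would set those effects through its own governance.

```python
from enum import Enum


class ClaimState(Enum):
    CONTESTED = "contested"
    MINORITY_VIEW = "minority view"
    SCOPE_SPLIT = "scope split"
    REPLICATION_FAILED = "replication failed"
    UNDER_APPEAL = "under appeal"
    SAFETY_HELD = "safety held"
    RETRACTED = "retracted"


ACTIONS = ("show", "route", "fund", "repeat", "merge")

# Placeholder policy: which actions each state blocks. Illustrative only.
BLOCKED = {
    ClaimState.CONTESTED: {"fund"},
    ClaimState.MINORITY_VIEW: set(),
    ClaimState.SCOPE_SPLIT: {"merge"},
    ClaimState.REPLICATION_FAILED: {"fund"},
    ClaimState.UNDER_APPEAL: {"merge"},
    ClaimState.SAFETY_HELD: {"route", "fund", "repeat", "merge"},
    ClaimState.RETRACTED: {"route", "fund", "repeat", "merge"},
}


def allowed(state: ClaimState, action: str) -> bool:
    """Check whether an action is permitted for a claim in the given state."""
    if action not in ACTIONS:
        raise ValueError(f"unknown action: {action}")
    return action not in BLOCKED[state]
```

Keeping the policy in a table rather than in scattered conditionals means a dispute about what a state should block becomes a reviewable change to one object.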

The trilogy has one movement. First, the record: science needs a shared frontier where corrections, failures, dependencies, and evidence can compound. Then, the engine: activity has to become governed state before it can guide the next task. Finally, the body: state has to reach instruments, labs, clinical systems, factories, funders, and regulators before the future changes shape.

Record, Engine, Body names a sequence of pressure. What is known must be writable. What is writable must be reviewable. What is reviewed must be able to act.

At small scale, that means a reviewer opens a diff and sees exactly what would change.

At institutional scale, a lab deposits a failed run and the next lab does not repeat it. A model predicts against the state it will later be judged by. A foundation changes Friday’s funding decision because Wednesday’s correction arrived in time.

Across the next thousand years, the details change. The invariant remains: intelligence and experimentation can become abundant, but trustworthy state integration remains the bottleneck.

That is the engine’s test: the correction arrives before the experiment is bought, before the model is trusted, before the grant renews, before the patient-facing decision inherits the old assumption.